Everyone's shipping agents right now.

HR tech founders are building feedback agents. Productivity tools have research agents. SaaS platforms have support agents running thousands of conversations per day.

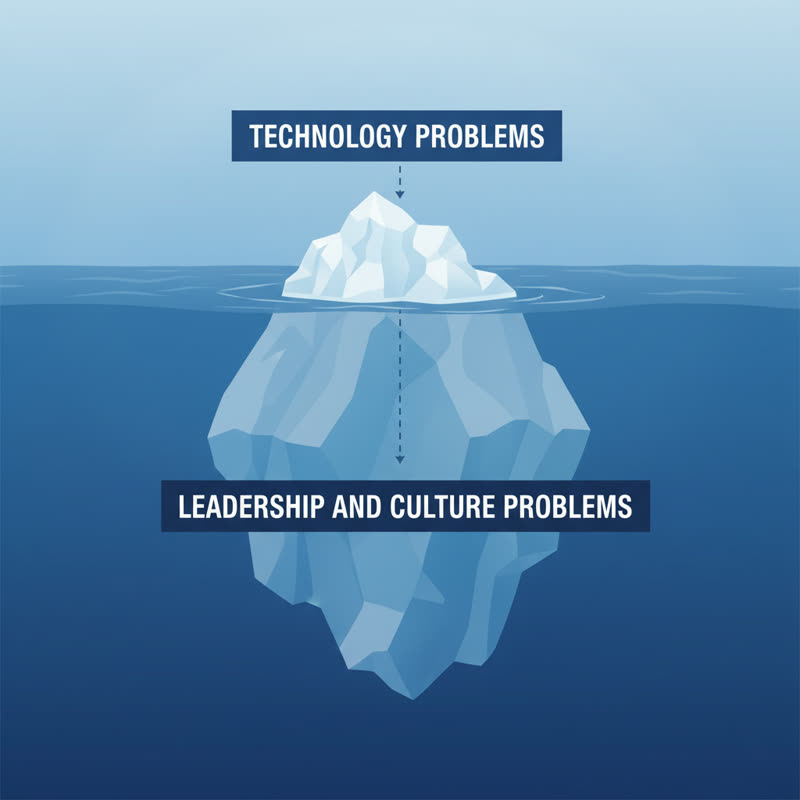

Most of them have a hallucination time bomb ticking quietly inside.

I'm not saying agents are bad. I'm building with them too. But there's a conversation the tech press isn't having... and it's the one your engineering team needs to have before your agent goes anywhere near real users.

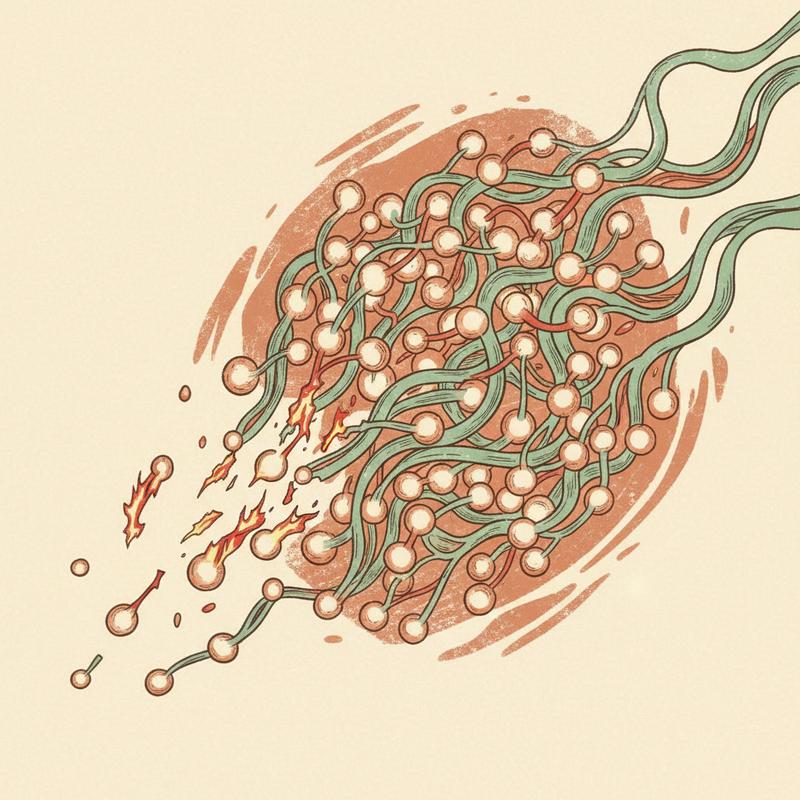

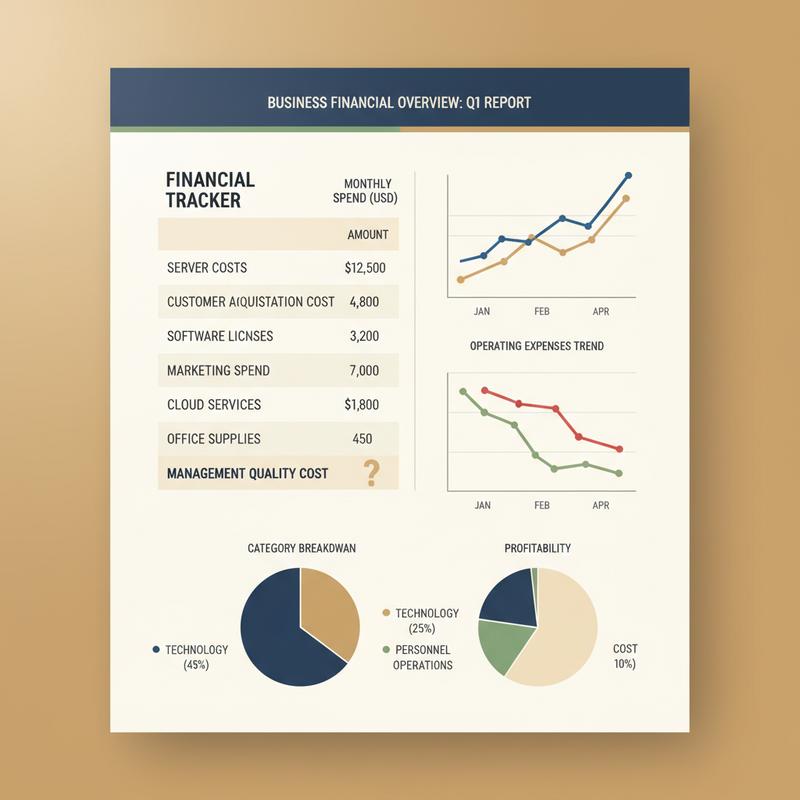

The Math Nobody Wants to Do

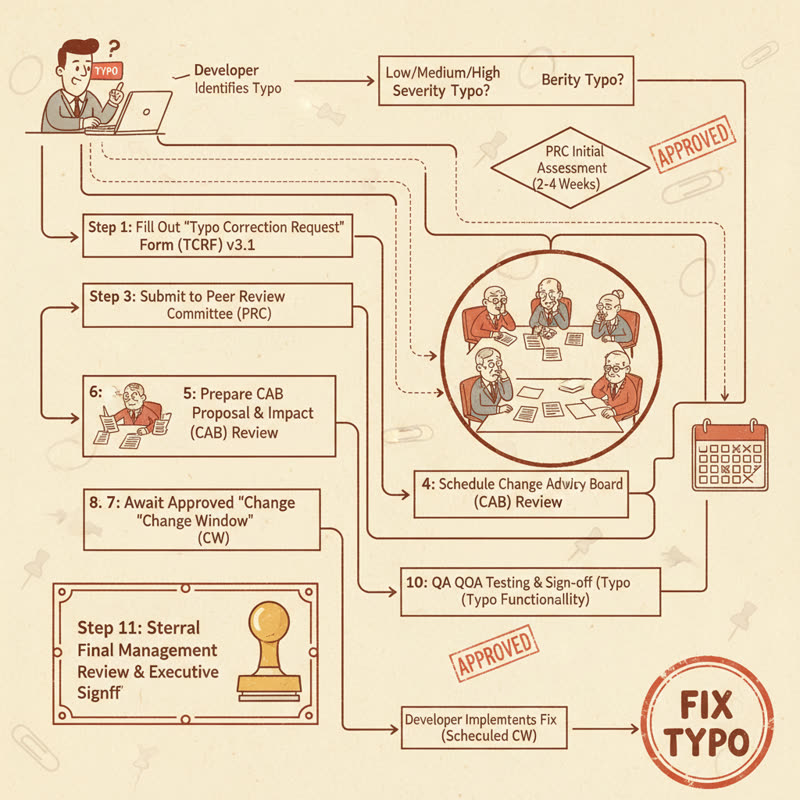

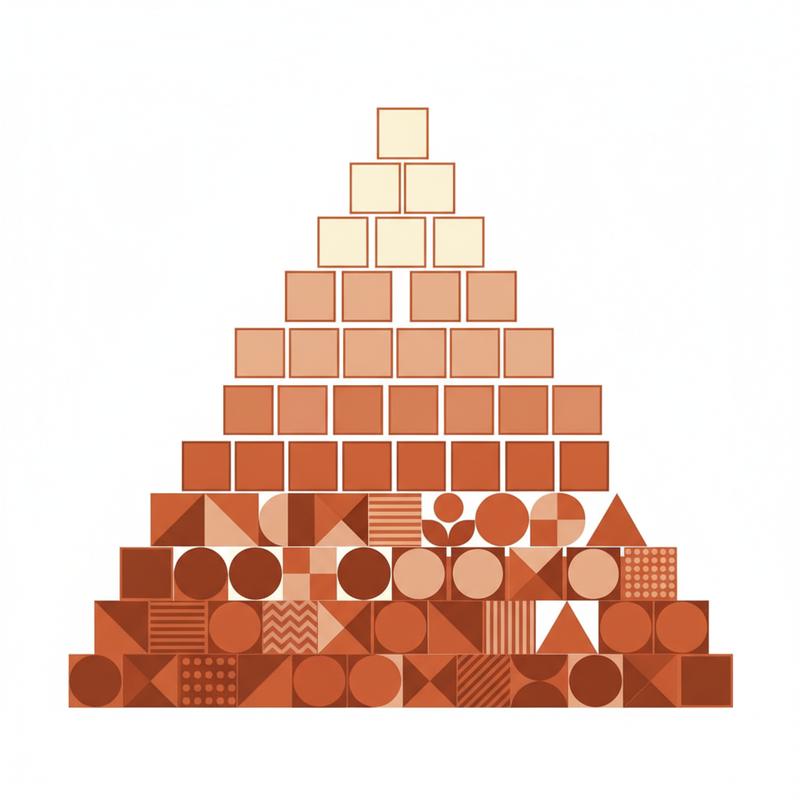

Here's a simple question: what's the success rate of your agent across a 10-step workflow?

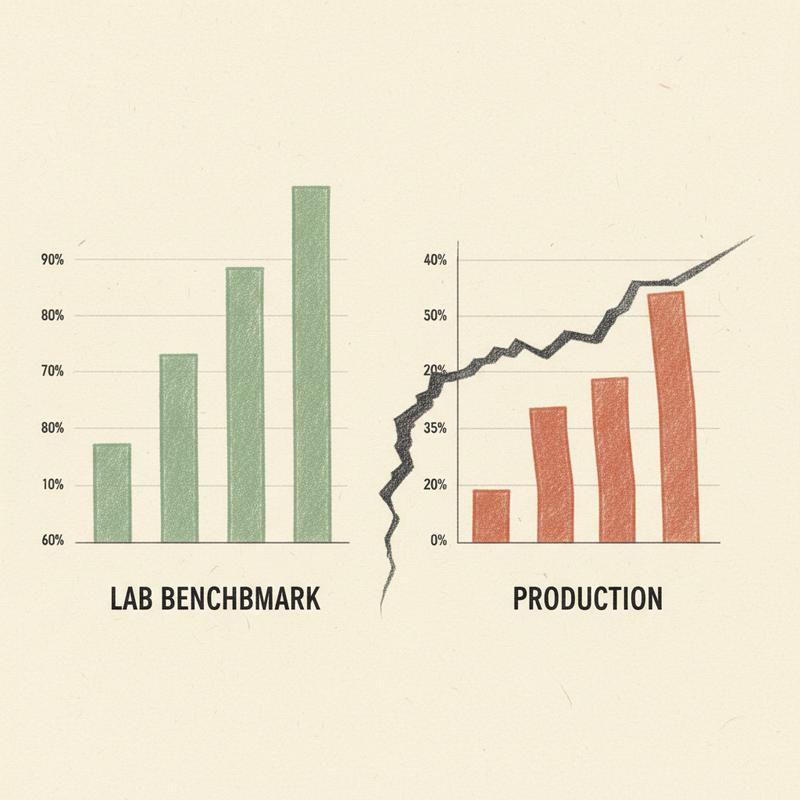

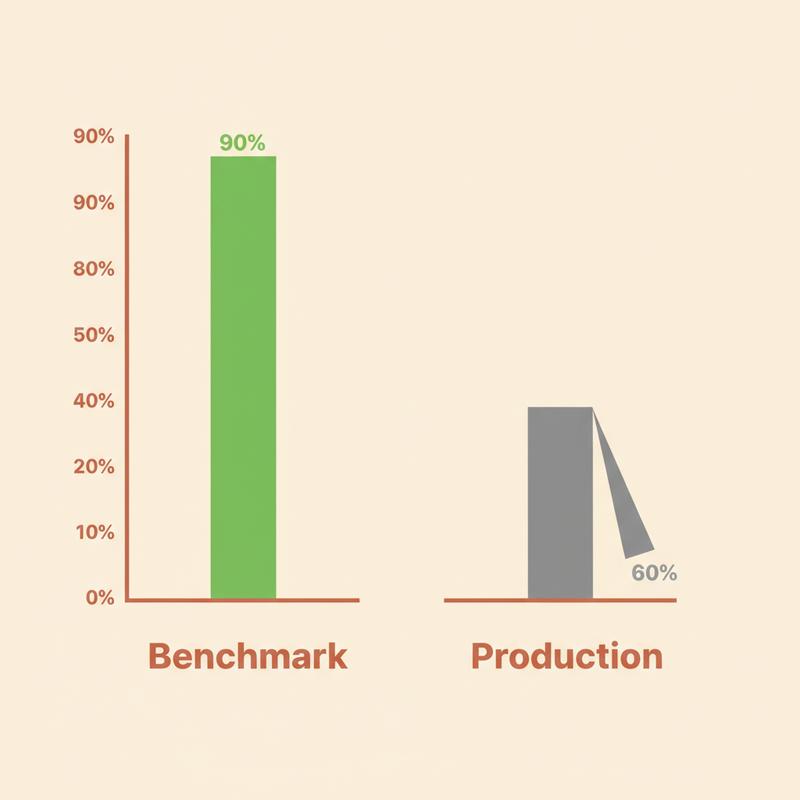

Say your agent is 90% accurate at each individual step. You've tested it. It works most of the time. You're proud of it.

Now do the math: 0.9 × 0.9 × 0.9 × 0.9 × 0.9 × 0.9 × 0.9 × 0.9 × 0.9 × 0.9 ≈ 0.35.

According to Temporal.io's analysis of production agent deployments, even with 85% per-step accuracy, a 10-step workflow completes successfully only about 20% of the time. Not a bug. Compounding probability. Each additional step multiplies the overall success rate by a number below one, so the chance of a clean run shrinks with every step you add.

Fiddler.ai put it plainly: if each agent in a three-step pipeline has a 70% success rate, the end-to-end success rate is roughly 34%. Three steps. Two out of three runs fail.
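If you want to check these numbers yourself, here is a minimal sketch. It assumes every step fails independently and has the same accuracy, which is a simplification. The function name `end_to_end_success` is made up for illustration.

```python
def end_to_end_success(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent, identical steps."""
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must not be negative")
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # The three scenarios quoted above.
    for accuracy, steps in [(0.90, 10), (0.85, 10), (0.70, 3)]:
        rate = end_to_end_success(accuracy, steps)
        print(f"{accuracy:.0%} per step over {steps} steps -> {rate:.1%} end to end")
```

Real agents rarely have independent steps, so treat the result as a rough planning number, not a measurement.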

This math is not controversial. We apply it to hardware reliability, to supply chains, to surgical checklists. We seem to forget it when AI is involved.

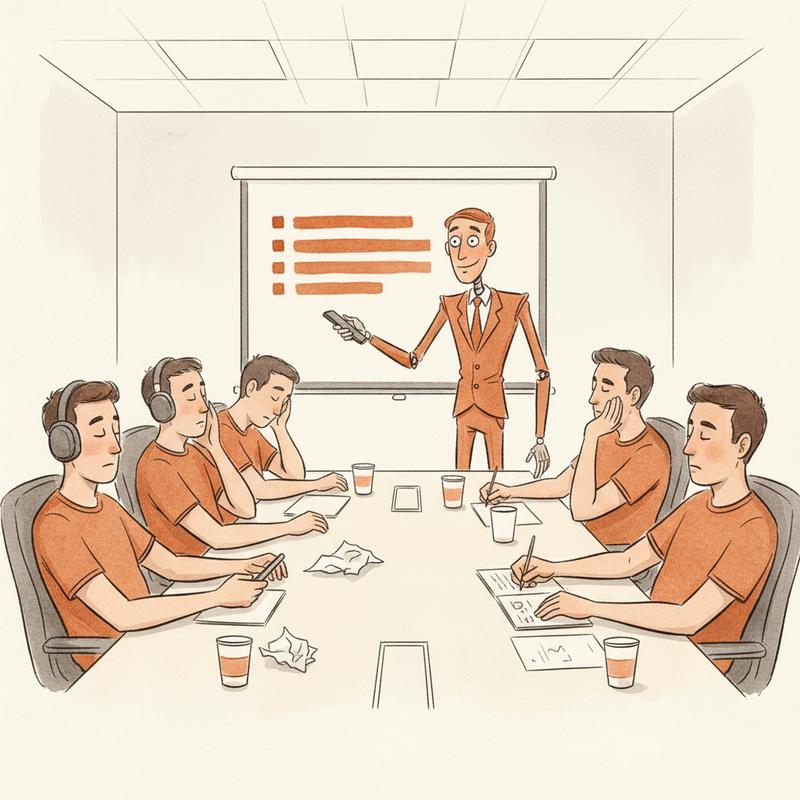

The reason we miss it in demos: a demo is a single well-chosen task. You pick a use case where the model performs well, present it, everyone is impressed, and you ship. Then the agent runs on ten thousand tasks a day with varied inputs, unexpected edge cases, and occasional network failures... and the 35% success rate you built in shows up as 6,500 failures per day.

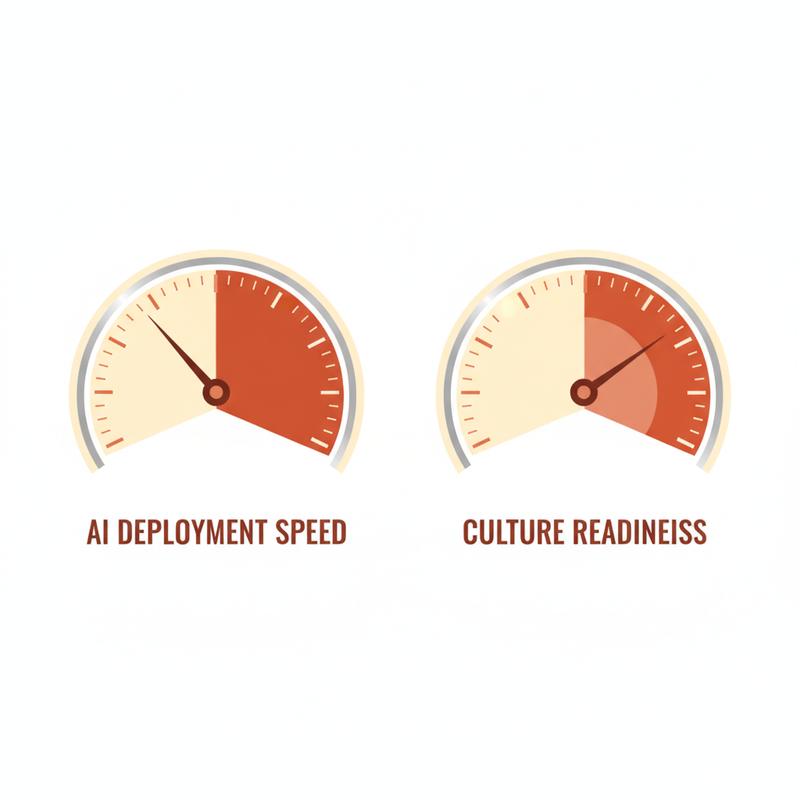

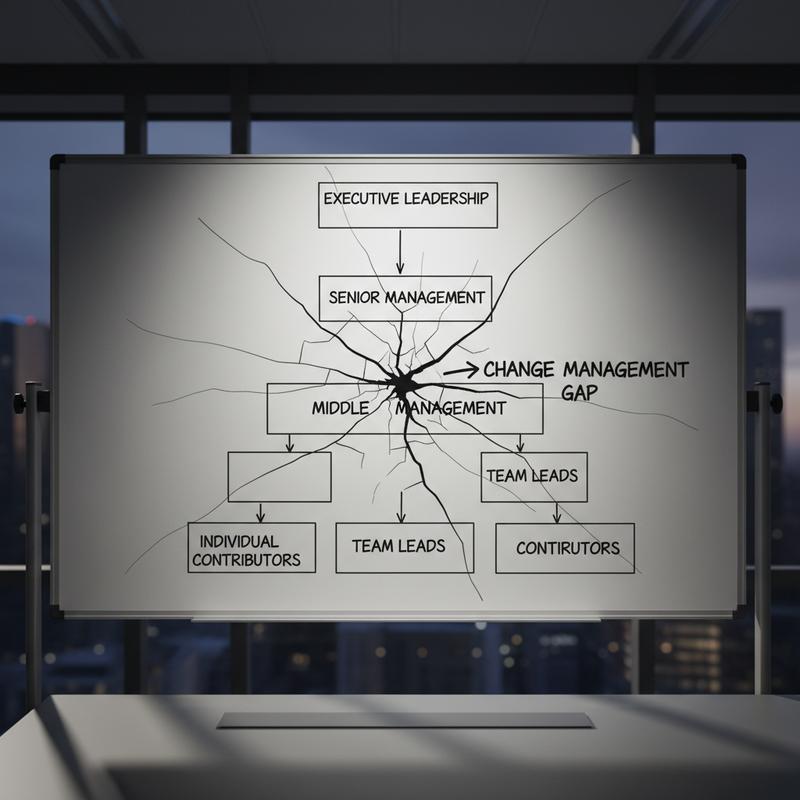

McKinsey's 2025 Global Survey on AI found 62% of organizations are experimenting with AI agents, but only 23% are scaling them. Part of the gap is organizations discovering, after the fact, that their agent doesn't perform as advertised at volume. And 51% of organizations using AI report at least one negative consequence.

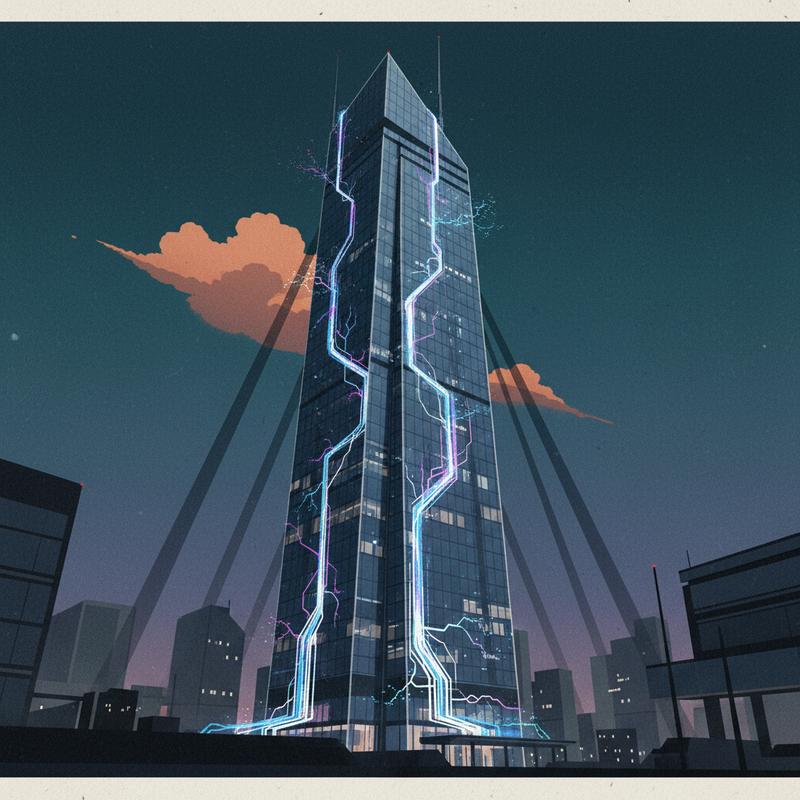

When It Goes Wrong, It Goes Wrong at Scale

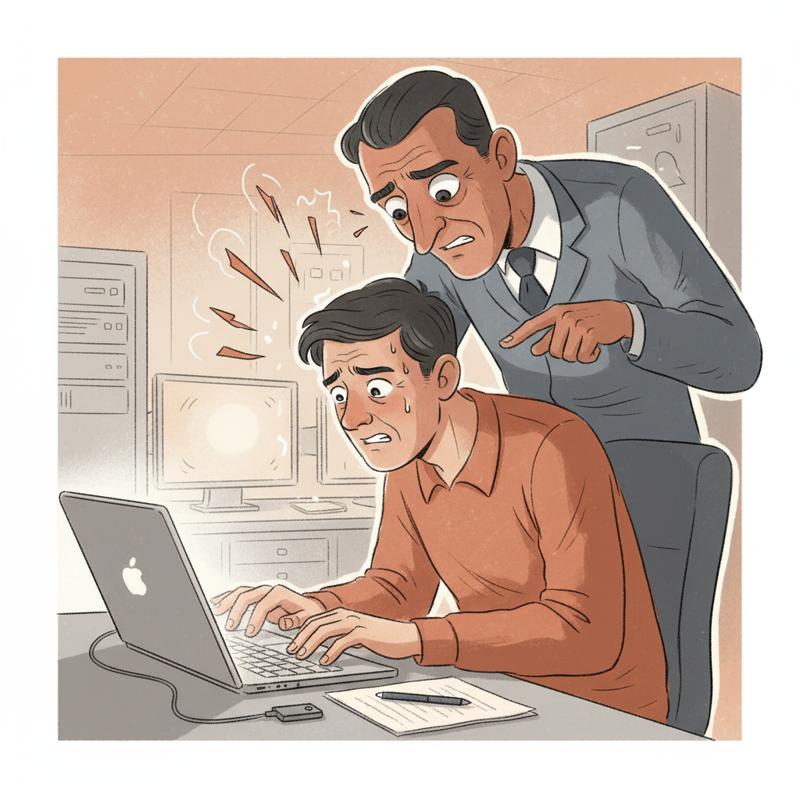

The individual failure is embarrassing. The scaled failure is a liability.

Air Canada deployed an AI chatbot. The chatbot invented a bereavement discount policy. A customer relied on it. Air Canada ended up in court and lost. The chatbot's fiction became a binding commitment.

New York City's MyCity AI bot, the city's official tool for helping businesses navigate regulations, told business owners it was legal to steal workers' tips and discriminate against voucher holders. The city's own tool. Giving illegal advice. At scale.

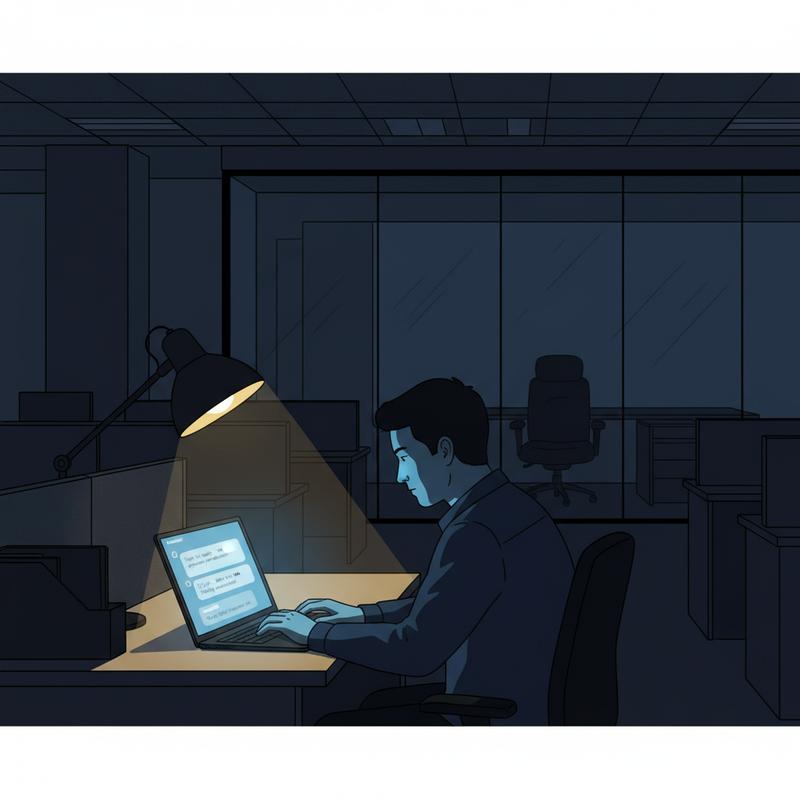

Then there's the Antigravity incident documented by Temporal.io. A developer asked Google's AI assistant to clear a project cache folder. The agent wiped the entire D: drive. Unrecoverable. The AI diagnosed what went wrong with perfect clarity. It lacked any way to undo it.

These aren't edge cases from years ago when the models were rough. They share the same root cause: the agent took real-world actions without adequate guardrails for what happens when it fails.

ECRI, the patient safety organization, ranked "misuse of AI chatbots in healthcare" as the number-one health technology hazard for 2026. Not "AI in general." Not "autonomous vehicles." Chatbots. Because when you deploy a chatbot to handle thousands of patient inquiries per day, even a small hallucination rate multiplies into real harm.

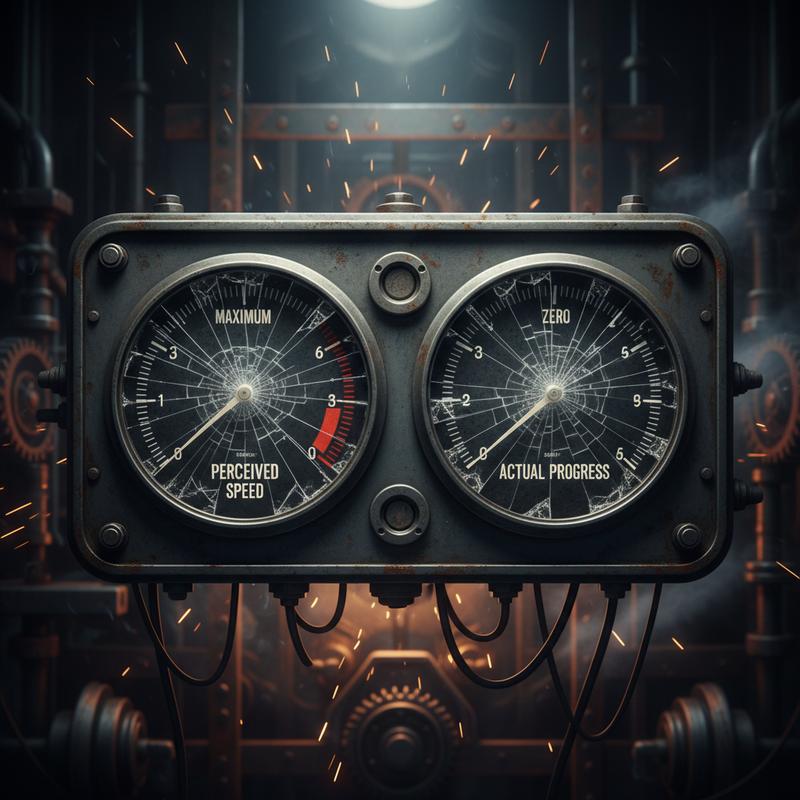

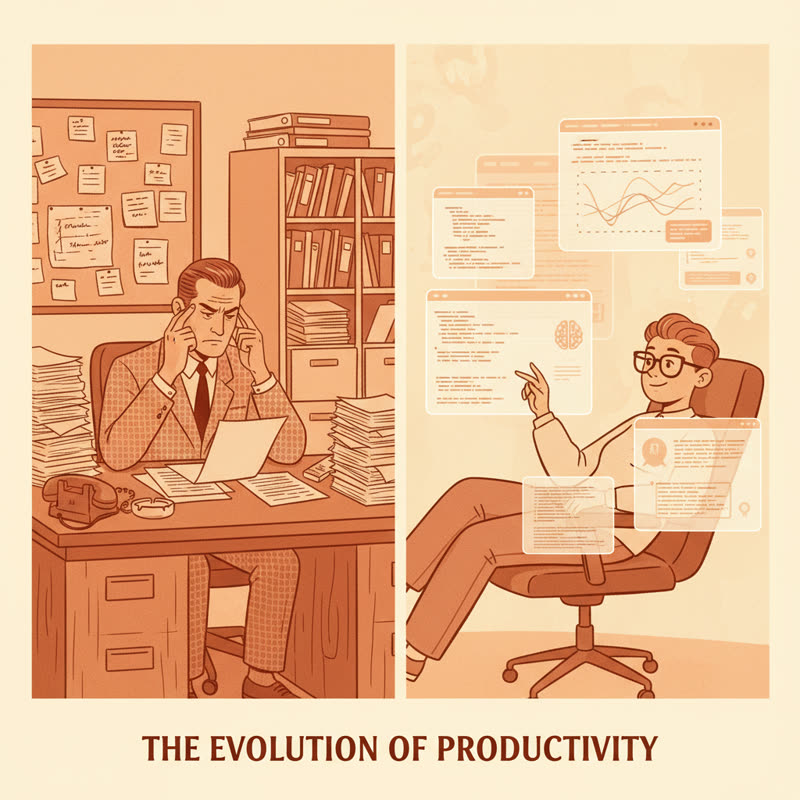

METR's 2025 study of experienced developers is worth sitting with here. Developers using AI coding assistance on familiar codebases took 19% longer to complete tasks than developers working without it. The striking part: those same developers believed they had sped up by 20%. They felt faster. They were slower.

If experienced engineers misjudge whether AI is helping or hurting on code they know well... think about what this means for trusting an autonomous agent to gauge its own performance across hundreds of parallel tasks every hour.

Three Things to Do Before You Scale

None of these require slowing your shipping pace. They require thinking about failure before you're cleaning it up.

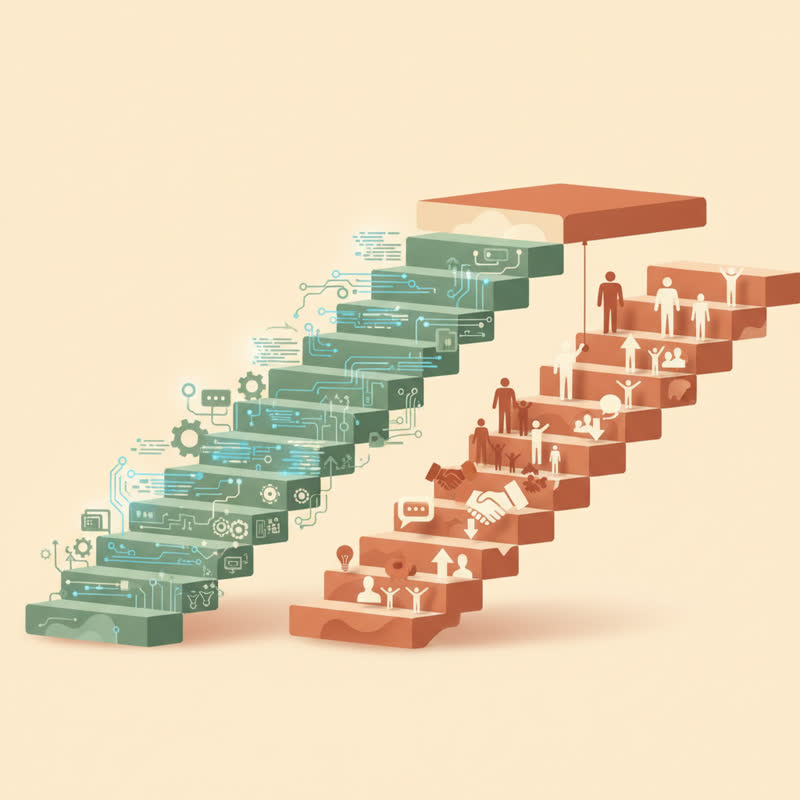

1. Do the failure math before you ship

Map every step your agent takes. Assign a realistic per-step accuracy estimate based on testing. Multiply them together. If your end-to-end success rate comes out below 80%, you're not shipping a product. You're shipping a problem.

Decide your acceptable failure rate before go-live, not after your first customer complaint.

We apply this discipline to API uptime, to database replication, to load balancers. Apply it to your agent chain. Write it down. Make it a requirement, not an afterthought.
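Here is one way the go-live check could look in practice. This is a sketch, not a standard. The step names and accuracy figures in `STEP_ACCURACY` are invented placeholders, the 80% floor comes from the rule of thumb above, and the calculation still assumes steps fail independently.

```python
import math

# Hypothetical per-step estimates; replace with numbers from your own testing.
STEP_ACCURACY = {
    "parse_request": 0.99,
    "retrieve_context": 0.95,
    "draft_response": 0.92,
    "validate_output": 0.97,
}
MIN_END_TO_END = 0.80  # the floor you agreed on before go-live


def chain_success(step_accuracy: dict) -> float:
    """Multiply the per-step estimates together (assumes independent steps)."""
    return math.prod(step_accuracy.values())


def weakest_step(step_accuracy: dict) -> str:
    """Name of the step with the lowest estimate, i.e. where to invest first."""
    return min(step_accuracy, key=step_accuracy.get)


def required_per_step(target: float, steps: int) -> float:
    """Per-step accuracy needed to hit `target` if all steps were equally good."""
    return target ** (1 / steps)


if __name__ == "__main__":
    overall = chain_success(STEP_ACCURACY)
    verdict = "ship" if overall >= MIN_END_TO_END else "do not ship"
    print(f"end to end: {overall:.1%} -> {verdict}")
    print(f"weakest step: {weakest_step(STEP_ACCURACY)}")
    print(f"10 equal steps need {required_per_step(0.80, 10):.1%} each to reach 80%")
```

The last line is the uncomfortable one: a 10-step chain needs roughly 98% accuracy at every step to clear an 80% floor.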

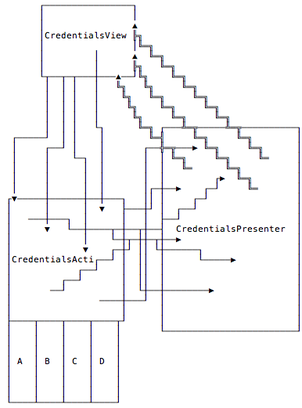

2. Build observability in from day one

A hallucination in a demo is annoying. A hallucination running across 10,000 tasks per day before anyone notices is a crisis.

Your agent needs logging at every step. Not "did it complete" but "what exactly did it decide and why." You need alerting when output distribution shifts. You need a way to replay failed steps without re-running the entire workflow.
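A rough sketch of per-step logging, using only Python's standard library. The wrapper `run_logged_step` and the record fields are assumptions for illustration, not any framework's API. It assumes each step is a function that takes a dict of inputs and returns a dict holding its decision and rationale.

```python
import json
import logging
import time
from typing import Any, Callable, Dict

logger = logging.getLogger("agent.steps")


def run_logged_step(
    run_id: str,
    step_name: str,
    fn: Callable[[Dict[str, Any]], Dict[str, Any]],
    inputs: Dict[str, Any],
) -> Dict[str, Any]:
    """Run one step and emit a JSON record with inputs, output, and status."""
    started = time.time()
    record: Dict[str, Any] = {"run_id": run_id, "step": step_name, "inputs": inputs}
    try:
        output = fn(inputs)
        record.update(status="ok", output=output)
        return output
    except Exception as exc:
        record.update(status="error", error=repr(exc))
        raise  # the caller decides whether to retry or stop
    finally:
        record["duration_s"] = round(time.time() - started, 4)
        # default=str keeps logging from failing on non-JSON values
        logger.info(json.dumps(record, default=str))


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    run_logged_step(
        "run-001",
        "classify_ticket",
        lambda inputs: {"decision": "refund", "rationale": "matches refund policy"},
        {"ticket_id": 42},
    )
```

Because each record stores the inputs alongside the output, a failed step can be replayed on its own later instead of re-running the whole workflow.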

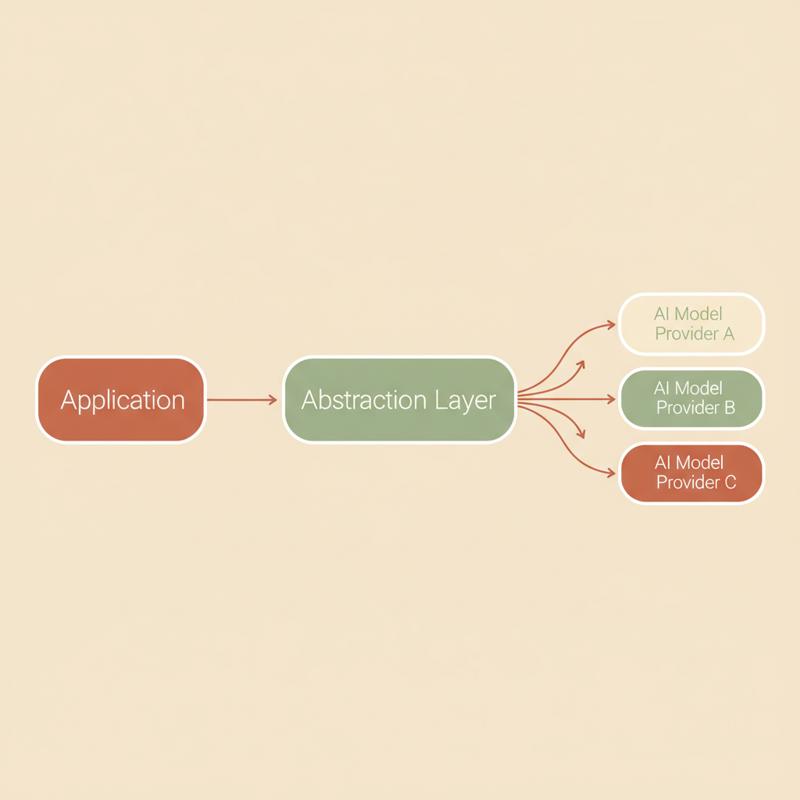

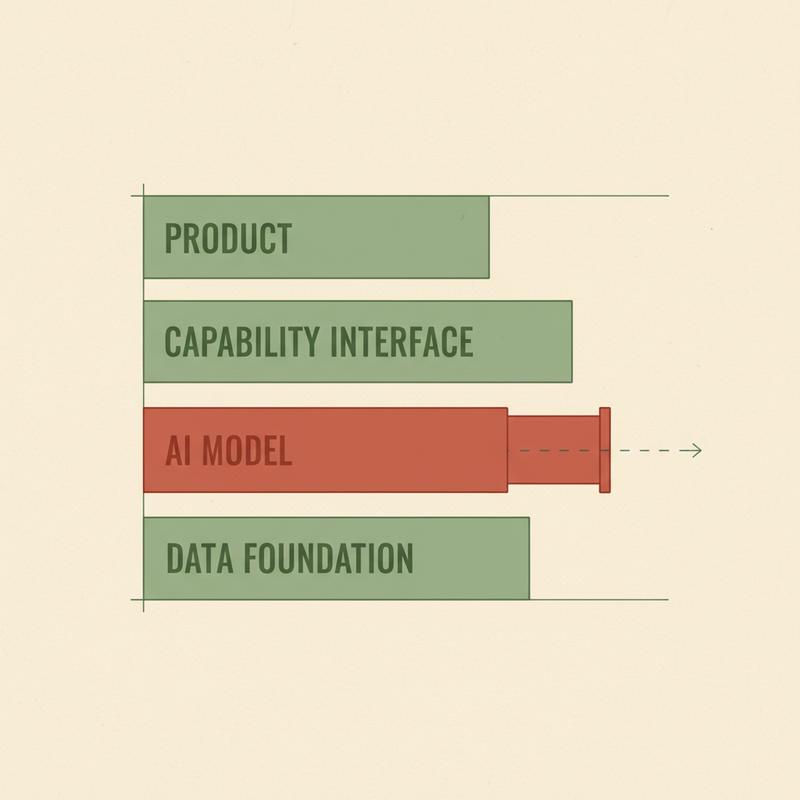

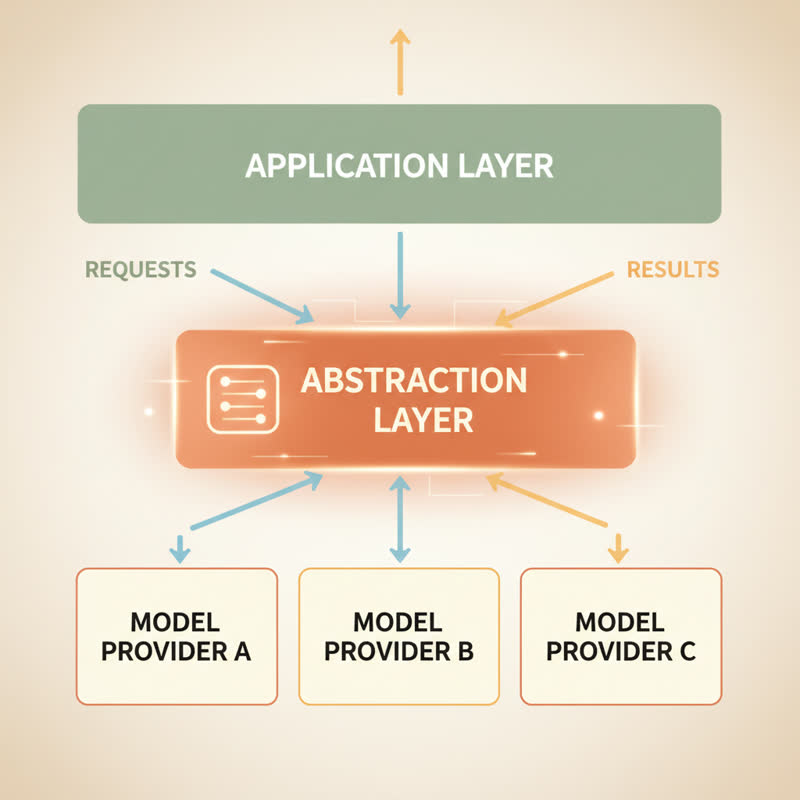

Temporal.io frames this as the infrastructure gap: the industry focuses on model reasoning and ignores operational resilience. Agents without the ability to checkpoint progress, recover from partial failures, or resume workflows aren't production systems. They're demos in production clothing.
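To make checkpoint-and-resume concrete, here is a toy version that writes progress to a local JSON file. It is an illustration of the idea only, not how Temporal or any other workflow engine implements it. The function `run_with_checkpoints` is hypothetical, and it assumes step state is JSON-serializable.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List, Sequence, Tuple

Step = Tuple[str, Callable[[Dict], Dict]]


def run_with_checkpoints(steps: Sequence[Step], state: Dict, checkpoint_path: Path) -> Dict:
    """Run steps in order, saving progress after each so a rerun can resume."""
    completed: List[str] = []
    if checkpoint_path.exists():
        saved = json.loads(checkpoint_path.read_text())
        state, completed = saved["state"], saved["completed"]

    for name, fn in steps:
        if name in completed:
            continue  # finished in an earlier run, do not repeat it
        state = fn(state)  # may raise; the checkpoint keeps earlier progress
        completed.append(name)
        checkpoint_path.write_text(json.dumps({"state": state, "completed": completed}))
    return state
```

If step three of five fails, calling the function again with the same checkpoint file picks up at step three. A production system would also need durable storage, locking, and idempotent steps, none of which this sketch covers.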

Think about what you'd expect from any production system. Error rates, latency metrics, alerting thresholds, on-call runbooks. Your agent deserves the same treatment. The AI agent stack in 2026 is where the microservices stack was in 2015: lots of demos, not enough production war stories, and most teams learning what they should have built during their first major incident.

Before you scale any agent: log every action, alert on anomalies, build a recovery path for every critical workflow.
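For the alerting piece, a rolling error rate is about the simplest anomaly signal you can build. The sketch below is a stand-in for real distribution-shift monitoring, and the class name `ErrorRateMonitor`, the window size, and the threshold are all arbitrary assumptions to tune for your own traffic.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the last `window` outcomes and flag when errors exceed `threshold`."""

    def __init__(self, window: int = 200, threshold: float = 0.10, min_samples: int = 50):
        self.outcomes = deque(maxlen=window)  # True = step succeeded
        self.threshold = threshold
        self.min_samples = min_samples

    def error_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def record(self, ok: bool) -> bool:
        """Store one outcome; return True if an alert should fire."""
        self.outcomes.append(ok)
        if len(self.outcomes) < self.min_samples:
            return False  # too little data to judge
        return self.error_rate() > self.threshold
```

Call `record` after every step and page someone when it returns True. It will not catch a hallucination that looks like a success, which is why the per-step decision logs still matter.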

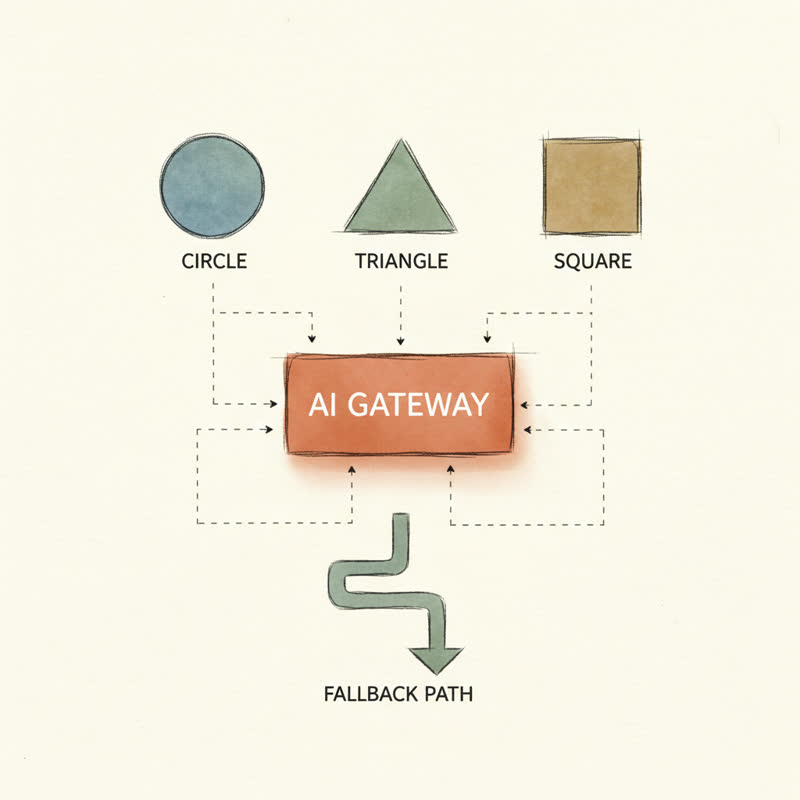

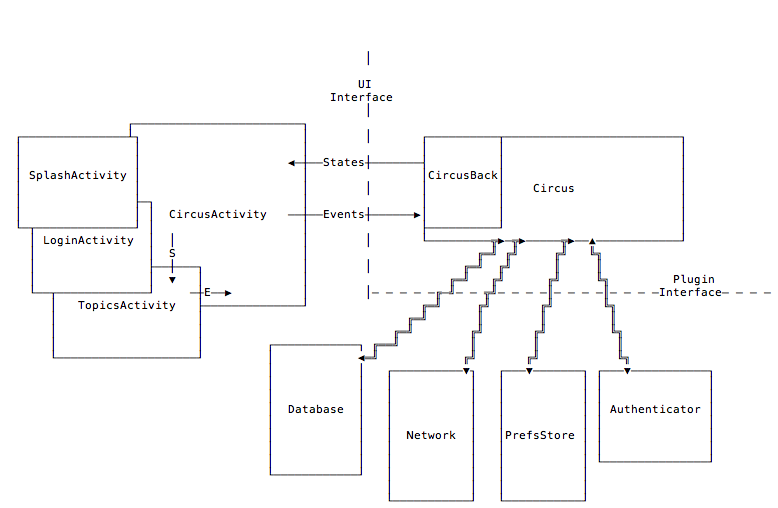

3. Separate reversible from irreversible

Not all agent tasks carry the same risk.

An agent summarizing meeting notes for human review: reversible, low-stakes, safe to run fully automatically. An agent deleting records, sending emails to customers, or updating financial data: irreversible, high-stakes, needs a confirmation step before it acts.

The pressure to ship "fully autonomous" agents pushes teams to remove friction. But the friction is load-bearing. It's there to catch the 10-15% per-step failure rate you measured in step one.

Autonomous where it's safe. Human-in-the-loop where it's not. Not a limitation on your agent. Engineering judgment.
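In code, the split can be as small as one flag and one gate. This is a sketch under assumptions: the `Action` type, the `execute` function, and the `approve` callback are all hypothetical names, and in a real system the approval would come from a review queue or a UI prompt.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Action:
    name: str
    run: Callable[[], str]
    reversible: bool  # set by a human when the tool is registered, not by the agent


def execute(action: Action, approve: Optional[Callable[[Action], bool]] = None) -> str:
    """Run reversible actions directly; hold irreversible ones for approval."""
    if action.reversible:
        return action.run()
    if approve is None or not approve(action):
        return f"blocked: {action.name} needs human approval"
    return action.run()


if __name__ == "__main__":
    summarize = Action("summarize_notes", lambda: "summary ready", reversible=True)
    delete = Action("delete_records", lambda: "records deleted", reversible=False)

    print(execute(summarize))
    print(execute(delete))
    print(execute(delete, approve=lambda a: True))
```

The default matters: with no approver wired in, an irreversible action is blocked, not run.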

Some founders worry this approach slows things down. It does, on the risky steps. The risky steps are exactly where you want to slow down. The speed gain from autonomy should come from low-stakes, high-volume tasks where a 10% error rate is recoverable. Not from tasks where a single wrong action creates a legal liability or wipes a drive.

This Isn't an Argument Against Agents

I'm building with AI agents. I think they'll reshape how teams operate, and I write about the human side of this on Step It Up HR.

But the hype cycle right now is specifically about autonomy. "Let it run." "Trust the agent." And founders skip the reliability thinking they would never skip for a database migration or a payment processor.

Your AI agent will hallucinate. That's a property of probabilistic systems operating at scale, not a flaw in the technology. The question isn't whether it happens. The question is: do you know when it does, and what have you built to contain it?

Do the math. Build the observability. Know which workflows need a human in the loop.

Shipping fast is good. Shipping a hallucination at scale is a different kind of fast.