Every week I talk to leaders who say the right things. AI agents are on the roadmap. The budget is allocated. The pilot is done. But the rollout is stuck.

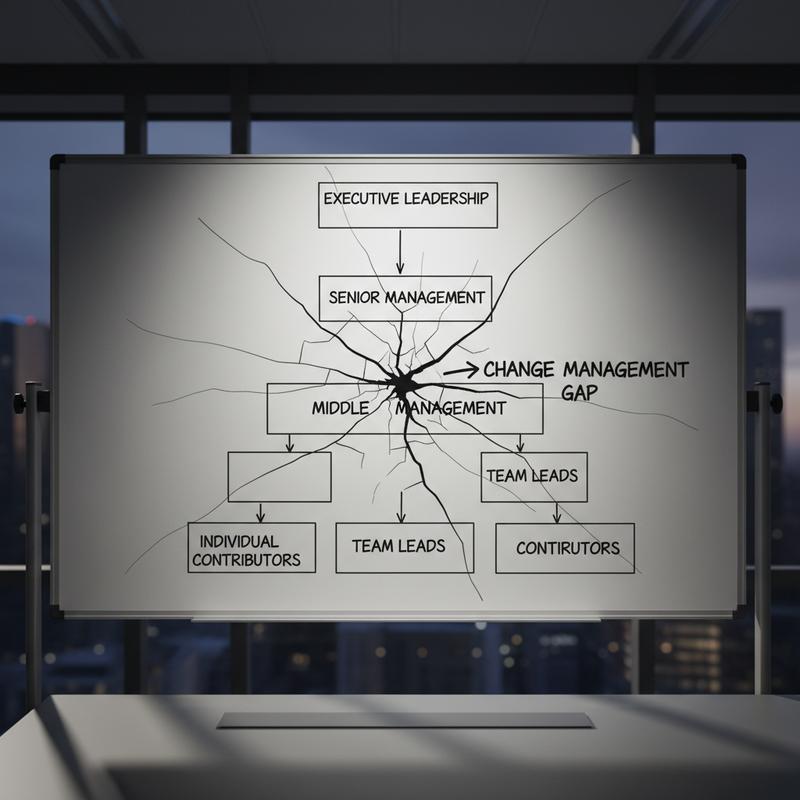

They blame the tech. The integrations are complex. The data is messy. The tools keep changing. All of this is true to some extent. But here is what the data shows: change management sits alongside integration and data quality as one of the top three barriers to AI agent adoption at scale. And change management is a leadership problem, not a technology problem.

The Numbers Every Leader Needs to Face

According to Anthropic's 2026 State of AI Agents Report, the top three barriers to scaling AI agents across organizations are:

- Integration challenges: 46%
- Data quality requirements: 42%
- Change management needs: 39%

Integration and data are real engineering problems. But change management? Change management is not an IT ticket. When it fails, that is a leadership failure.

And it gets worse. McKinsey's Superagency in the Workplace report found that C-suite leaders are more than twice as likely to cite employee readiness as a barrier to AI adoption as they are to name their own role in it. Meanwhile, the employees they're blaming? Already using AI at three times the rate leaders think.

Sit with that. Employees are using AI at three times the rate their leaders estimate. And leaders are pointing at employees as the problem.

The Patterns I Keep Seeing

I've been in and around technology leadership for a long time. The patterns of failure in AI adoption are not new. I've watched the same film before -- with ERP rollouts, with agile transformations, with cloud migrations.

Here is what leadership failure looks like in practice.

The manager who doesn't use the tool. You do not lead your team through a change you refuse to participate in. If you're asking your people to adopt AI agents into their workflows but you personally haven't opened the tool in a week, your team sees through you immediately. You're not a sponsor of this transformation. You're a passenger.

The rollout without a why. Someone above you said deploy AI agents. You handed it to a project manager. The project manager handed it to IT. IT deployed it. Nobody told the team why it matters, what problem it solves, or what success looks like. This is how you get a 32% stall rate after pilot, which is what research into AI agent deployments found. Roughly one in three companies never gets past the proof of concept because nobody made the case for change.

Measuring the wrong thing. You added AI to an existing process and measured whether the AI performed the old steps. AI agents are not a faster typewriter. If you're retrofitting them onto a broken process, you'll get a broken process with an AI in it. The BCG/MIT Sloan 2025 Agentic Enterprise report identifies this as one of four critical tensions leaders face: process retrofitting versus reimagining. Most leaders choose retrofitting because reimagining requires courage.

The Leadership Blindspot Is Real

The McKinsey finding about C-suite leaders blaming employees is not a one-off. It's a pattern.

When I look at the 99.5% of people who have had at least one bad boss in their career, the failure mode is almost always the same: the leader exempted themselves from the standards they set for others. They talked about accountability and avoided it themselves. They talked about growth and stopped learning. They talked about change and resisted it.

AI adoption is the same story playing out with a new cast.

Right now on Reddit, people are watching AI agents book flights, compare prices, and manage their calendars -- and the conversation oscillates between amazement and deep unease. The technology is working. The employees experimenting with it at home, on their own time, are not the problem. What they're waiting for is a leader who believes in it enough to model it.

This is on you.

The Four Decisions Leaders Are Avoiding

BCG and MIT Sloan identified four tensions defining whether organizations succeed with agentic AI. Every single one is a leadership decision, not a technical decision:

Scalability versus adaptability. Do you build fast or build flexible? There's no right answer -- but someone has to make the call and own it.

Investment versus employment. If AI agents absorb 30% of a team's work, do you cut headcount, redeploy people, or do both? Waiting for the answer to appear is not a strategy.

Supervision versus autonomy. How much do you trust the agents? How much oversight is required? This requires someone to decide what acceptable risk looks like in your organization.

Process retrofitting versus reimagining. Do you make AI fit your current process or redesign the process around AI's strengths? This is the biggest one, and most leaders dodge it entirely.

These aren't API configuration questions. They're strategy, governance, and culture questions. They sit on the leader's desk. Nobody else gets to answer them.

What Good AI Leadership Looks Like

I'm not going to pretend this is easy. It's not. The pace of change in AI tooling is relentless. Models update weekly. New agents appear before you've finished evaluating the last ones. The pressure to show ROI before you've built genuine competence is real.

But here is what I've seen work.

Use the tools yourself. Every day. Not as a demo. Not to show your team. As a genuine part of your own workflow. Ask an AI agent to help you prepare for your next board meeting. Have it summarize your week's worth of emails. Use it to draft your next strategy update. You'll understand the limitations, the prompting discipline required, and where it genuinely saves time. You don't lead something you haven't lived.

Fix the process before you add AI to it. If a process is broken, AI will do the broken thing faster. Before any AI deployment, ask your team: what problem are we solving? If the answer is "we're told to use AI," start over. The answer has to be a real bottleneck, a real pain point, a real inefficiency. Fix it first. Then introduce AI into the fixed process.

Be honest about what scares you. Some leaders resist AI because they're worried it will expose gaps in their own thinking. Some worry about job displacement -- including their own. These are legitimate fears. Name them. Teams respect honesty far more than false confidence. "I'm figuring this out alongside you" is a stronger leadership statement than a polished rollout deck.

Give your team the mandate to experiment. Not permission -- mandate. Set aside time. Protect it from other work. The 62% of enterprises without a clear starting point are stuck not because they lack tools but because nobody gave the team structured space to experiment safely.

The Tech Is Ready. Are You?

Thirty-five percent of companies are already deploying agentic AI, and 44% plan to follow. The models are good enough. The tooling is mature enough. The business cases exist.

What isn't ready in most organizations is the leadership layer above the technology.

If your AI rollout is stuck in pilot hell, if your team is unconvinced, if the ROI projections are not landing -- don't blame the integration complexity or the data quality. Ask yourself honestly whether you've led this change or only announced it.

There's a difference. Your team sees which one you're doing.

What's the biggest leadership obstacle you're seeing in your own AI adoption? I'd genuinely like to know.