The Thing Stopping AI Agents at Your Company Isn't the Technology

Every week I talk to leaders who are frustrated with AI. They bought the tools. They paid for the subscriptions. They announced the initiative at the all-hands. Six months later, nothing moved.

Most of them have the same diagnosis: the technology isn't ready. The models need more work. The integrations are too complex.

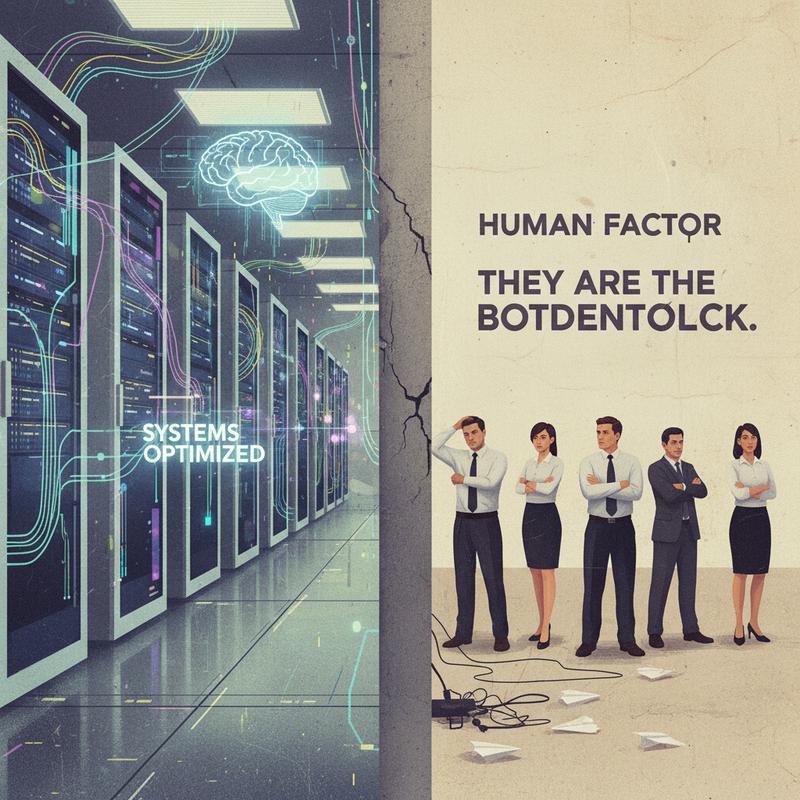

My diagnosis is different. The technology is fine. The leadership isn't.

The Numbers Are Uncomfortable

The 2026 State of AI Agents Report lists the top three blockers to enterprise AI adoption:

- Integration challenges: 46%

- Data quality requirements: 42%

- Change management needs: 39%

Read those again. All three are people problems.

Integration challenges aren't solved by better APIs. They're solved by leaders who get the right people in a room and make a decision about architecture. Data quality issues don't fix themselves. They require someone with authority to say "this is a priority and we're resourcing it." Change management? It's leadership, full stop.

MIT's State of AI in Business 2025 found 95% of enterprise AI pilots fail to deliver measurable business impact. Not because the technology broke. Because the organizations weren't ready to use it.

Only 11% of organizations are running agentic AI in production today. Another 38% are still piloting, and the rest are stuck at "exploring." The gap between piloting and production is not a technical gap. It never was.

What Leadership Failure Looks Like in Practice

I've seen this pattern enough times to name it:

The CTO picks a vendor, signs a contract, and announces AI is coming. The team gets a demo. Someone spins up a trial account. A few early adopters use it with enthusiasm for two weeks. Then the energy fades and everyone returns to what they were doing before.

Why? Because nobody asked:

- What process are we changing?

- Who needs to work differently, and how?

- What does success look like in 90 days?

- Who is accountable for the result? One person, not a committee.

Brent Collins at Intel said it well: "Don't simply pave the cow path." Most companies deploy AI agents on top of broken processes. The agent automates the dysfunction. Then leaders wonder why the metrics are flat.

Harvard Business Review's research found 45% of executives reported AI ROI below expectations. Only 10% said it exceeded them. The barriers weren't technical. They were:

- Uncertainty: employees lack foundational knowledge, which splits them into two unproductive camps, those who think AI is magic and those who think it's worthless. Neither group uses it well.

- Fear of replacement: workers drag their feet on AI training when they suspect the tool is there to eliminate their jobs. Nobody explained the real purpose.

- Status loss: senior engineers hide their AI usage because it feels like admitting weakness. Experience-based authority gets threatened when a junior with the right tools outperforms a veteran.

- Resource hoarding: successful divisions keep their models and datasets locked down instead of sharing.

Not one of those is a technical problem. All of them are solvable with deliberate leadership.

The Change Management Trap

When most organizations hear "39% cite change management as a blocker," they translate it to "we need better communication." So they run a few lunch-and-learns and call it change management.

It isn't.

Real change management for AI adoption means:

Redesigning the workflow before the agent arrives. If you automate a bad process, you get a faster bad process. The ROI evaporates. Deloitte referenced Henry Ford on this point: many organizations are busy finding better ways of doing things they shouldn't be doing at all. AI amplifies this problem if you skip the design phase.

Making AI use visible and rewarded. When people hide their tool use out of shame or fear, the organization loses the feedback loop needed to improve. Celebrate what works. Name it. Share it.

Giving people permission to fail while learning. A team trying new things will make mistakes. Leaders who punish those mistakes get teams who stop trying. You will not get adoption without psychological safety around experimentation.

Connecting the initiative to outcomes employees care about. Not "the board wants AI ROI." Something like: "we want to eliminate 400 hours of data entry per month so you have time for work worth doing." The framing lands differently.

The Engineer's Role Here

If you're in an engineering leadership role, there's a specific trap worth naming: the tool-selection trap.

It's easy to spend your energy evaluating models, comparing APIs, and benchmarking latency. That work matters. But it's not the reason your AI initiative will fail or succeed.

I've built AI agents with clients and partners. In roughly half the cases, the first thing a client wanted to automate was not the most valuable thing to automate. The technology was ready. The business thinking wasn't. The real work was a two-hour whiteboard session figuring out what the actual problem was, and whether an AI agent was the right solution for it at all.

That whiteboard session is a leadership conversation, not an engineering one. Engineering leaders who learn to run it get far better results than those who go straight to implementation.

What the Teams Getting It Right Do Differently

The organizations getting real results from AI agents share a few things in common. None of these are difficult to understand:

They start with a problem, not a product. Not "let's use AI." Instead: "we spend 400 hours a month on X and it's costing us." Then they ask whether AI is the right solution for X.

They make the process legible before they automate it. If only one person fully understands how something works, an agent will fail inconsistently and nobody will know why. Document the process. Test it manually. Then automate.

They set specific targets. Not "use AI more." Something like: "By Q3, 80% of customer intake forms go through the agent without human review." That is a target you can measure progress against.

They assign a single owner. Not a committee. One person. Committees hold meetings. People ship.
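For the engineering leaders reading: a target like the intake-form example above only works if someone can compute it from real records. Here is a minimal sketch of what that measurement might look like. The `IntakeForm` fields, the function names, and the sample data are all hypothetical, invented for illustration. Your own system will log this differently.

```python
from dataclasses import dataclass


@dataclass
class IntakeForm:
    """One processed customer intake form (hypothetical record shape)."""
    form_id: str
    handled_by_agent: bool
    human_reviewed: bool


def autonomous_rate(forms: list[IntakeForm]) -> float:
    """Share of forms the agent handled with no human review."""
    if not forms:
        return 0.0
    autonomous = sum(
        1 for f in forms if f.handled_by_agent and not f.human_reviewed
    )
    return autonomous / len(forms)


def target_status(forms: list[IntakeForm], target: float = 0.80) -> dict:
    """Compare the measured rate against the stated target."""
    rate = autonomous_rate(forms)
    return {
        "rate": rate,
        "target": target,
        "gap": max(0.0, target - rate),  # how far short of the target we are
        "met": rate >= target,
    }


if __name__ == "__main__":
    # Made-up sample: 7 of 10 forms went through without human review.
    sample = [IntakeForm(f"F{i}", True, False) for i in range(7)]
    sample += [IntakeForm(f"F{i}", True, True) for i in range(7, 9)]
    sample += [IntakeForm("F9", False, True)]
    status = target_status(sample)
    print(f"Autonomous rate: {status['rate']:.0%} (target {status['target']:.0%})")
    print("Target met" if status["met"] else f"Gap: {status['gap']:.0%}")
```

The code is not the point. If nobody on the team can write down the definition of "without human review" precisely enough to compute it, the target is not yet specific.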

Before You Buy Another Tool

Ask yourself one question: does your team know why you're doing this, who owns it, and what success looks like in 90 days?

If the answer involves a pitch deck and a Slack channel, you're not ready to scale. You're ready for more failed pilots.

Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027. The reason won't be the models or the APIs. It will be organizations automating the wrong things, with unprepared teams, no clear owner, and no shared definition of what winning looks like.

The technology is not your bottleneck.

If you're an engineering leader, this is your moment to step out of the tool-selection role and into the organizational design role. The teams winning with AI are the ones whose leaders asked hard questions before signing the contract.

What questions haven't you asked yet?