Gartner dropped two numbers this year, and together they tell you everything about where enterprise AI is heading.

Number one: 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. That is more than an eightfold jump in roughly a year. Companies everywhere are deploying agents for scheduling, compliance checks, data processing, customer queries, and recruitment screening.

Number two: 40% of agentic AI projects will be cancelled by 2027. Failures driven by escalating costs, unclear business value, and inadequate governance.

Not 4%. Not a rounding error. Forty percent.

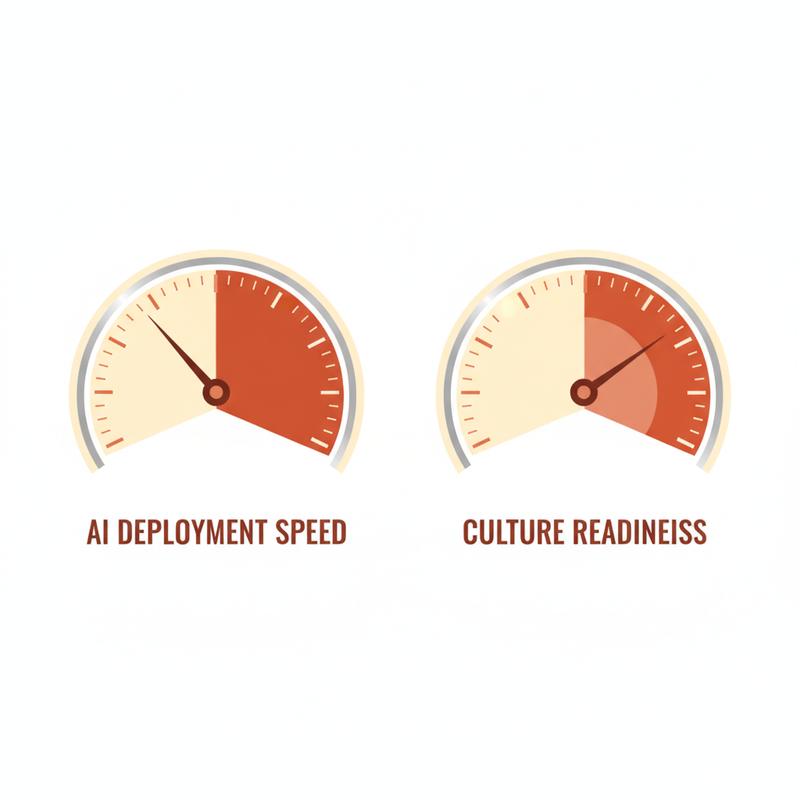

Both numbers come from the same analyst firm, and together they describe a perfect storm. We are racing to deploy at an unprecedented pace, and at the same time, two in five agentic AI projects are on track to be abandoned. Not because the technology failed. Because the organisations did.

The agents are ready. The question is whether you are.

Trust Is Going Backwards

While deployment plans accelerate, something else is moving in the opposite direction: trust.

One year ago, 43% of business leaders said they trusted fully autonomous AI agents. Today, the number sits at 27%. That is a 16-point drop in twelve months, during a year of record-breaking AI investment announcements.

This is the gap nobody talks about at AI conferences. Leaders are announcing agents while quietly losing faith in them.

The wider research fills in the picture: fewer than half of decision-makers understand AI agent capabilities well enough to assess deployment risks. Over 80% of enterprises lack mature infrastructure for governance and monitoring. And 95% of organisations report that their AI initiatives produced "little to no measurable business return."

Ninety-five percent.

The technology is not the problem. We are.

What Happens When You Layer AI Onto a Broken Culture

Here is a scenario playing out in organisations right now.

Leadership announces an AI agent initiative. The technology team deploys a handful of agents: one for HR queries, one for code review, one for compliance monitoring, one for customer ticket routing. Each team builds their own. Nobody coordinates.

Three months in, you have what the industry calls agent sprawl: overlapping responsibilities, contradictory instructions, permission creep, and agents operating with no awareness of organisational priorities. The agents are not collaborating. They are competing.

McKinsey observed the core problem: agentic AI fundamentally disrupts traditional hierarchical models by distributing decisions across humans and machines. Most organisations respond by simply layering AI onto existing structures, recreating the same bottlenecks they sought to eliminate.

You do not fix a broken process by automating it. You get a faster broken process.

Agent sprawl shows up in four ways:

Duplication and conflict. Multiple agents receive overlapping mandates and generate contradictory outputs. Nobody owns the conflict resolution.

Permission creep. Agents gradually expand their scope because nobody defined the boundaries clearly at the start. By month six, the compliance agent is making decisions it was never authorised to make.

Invisible context. Agents do not absorb context through osmosis. They do not know your organisation's priorities, values, or working agreements unless you make those things explicit. Most organisations never do.

Knowledge loss. Learning stays siloed in individual agent logs rather than feeding back into organisational intelligence. You are generating data, not insight.

None of these are technology failures. Every single one is a leadership and culture failure.

What Culture Readiness Means in Practice

Culture readiness is not about whether your people are "open to AI." Research shows workers are more ready for AI agents than their organisations are. Employee resistance is not the primary adoption barrier. The bottleneck is organisational: missing governance, missing communication frameworks, missing training.

Culture readiness means three specific things.

Clarity of accountability. Every agent needs a human owner. Not a vendor, not a team, not an initiative lead. A named person, responsible for what the agent does and what happens when it goes wrong. Without this, you have deployed a decision-maker with no consequences and no oversight.

Explicit working agreements. Humans pick up a great deal through osmosis: which decisions require escalation, what the organisation values, where the real authority sits. Agents do not. All of it needs to be written down and given to agents as operational context. Organisations skip this step because it feels like documentation work. It is the work.

Psychological safety to flag problems. When an agent makes a bad call, the person who notices needs to feel safe saying so. In organisations where people are punished for raising problems, AI errors get buried. The agent keeps making the same bad decisions. The damage compounds.

Know Your Own Culture Before You Deploy

Here is the uncomfortable question: do you know how your team operates, or do you know how you think your team operates?

Most leaders are confident they understand their organisation's culture. Research consistently suggests otherwise: employees and managers hold wildly divergent views on trust levels, psychological safety, and how decisions are made.

Before deploying AI agents, you need an honest picture of your own organisational behaviour. Not a survey, not a culture deck, not a workshop. Real feedback on how your leadership is landing with your team.

This is precisely why tools like StepUp2BAT exist. Behavioural awareness (understanding how your leadership is perceived and where the gaps are) is the prerequisite for any meaningful transformation. You do not install a new operating system on a machine you have never audited.

AI agents will amplify your culture. Get it right, and they amplify trust, speed, and clarity. Get it wrong, and they amplify confusion, conflict, and fear. The amplification is the point.

Three Questions Before Your Next Agent Deployment

The organisations seeing real results from AI agents are not the ones with the most agents. They are the ones who slowed down long enough to answer three questions:

1. Who owns this agent's decisions? Name a person. Not a team. A person.

2. What explicit context have we given this agent? Not access to your systems. Your values, your priorities, your escalation rules, written down.

3. Is our culture safe enough to catch errors early? If people are afraid to flag problems, your AI programme will fail quietly.

Eighty-two percent of organisations plan to integrate AI agents within the next three years. Only 14% have deployed at any meaningful scale. The gap is not a technology gap. It is a culture gap.

The agents are ready. The question is whether your organisation is ready to use them well.

Where have you seen culture readiness done right with AI? And where have you seen it go badly? I am genuinely curious.

One more thing worth saying: culture readiness is not a one-time audit. It is an ongoing posture. The organisations winning with AI agents are treating organisational behaviour with the same rigour they apply to their technical infrastructure. They are asking "how is this landing with my team?" with the same regularity they ask "how is our uptime?"

The two questions are connected. When your people trust you, your AI programme has a fighting chance. When they do not, every agent you deploy is one more source of anxiety in an environment already stretched thin.

Start with the humans. The agents will follow.